The universe shouldn’t work.

That’s not a philosophical position. It’s an engineering observation. The constants that govern how matter behaves (the strength of gravity, the mass of the electron, the rate at which the universe expands) are set to values so specific that adjusting some of them by even a small fraction collapses the whole structure. No stars. No chemistry. No time long enough for anything to happen. The tolerances are absurd.

Physicists call this fine-tuning. The name is polite. What they mean is: the odds against this universe are so extreme that “chance” stops being a useful word for it.

The Two Escapes That Aren’t

Two answers dominate the debate, and neither is as clean as its proponents pretend.

The first is design. Someone set the dials. The universe looks like it was built for life because it was built for life. The problem is that this explains nothing mechanically; it just relocates the question one level up. Who built the builder? And why does a universe designed for life consist of 99.9999% lethal vacuum, with life confined to a thin biological smear on one unremarkable rock?

The second is the multiverse. If you generate enough universes (some versions say 10 to the power of 500, which is a number that has stopped meaning anything), eventually one rolls the right numbers. We’re in that one because we couldn’t exist in any other. This is the Weak Anthropic Principle: we observe what permits our observation. True, trivially. It explains why we’re here without explaining what set the parameters. It also requires a vast, possibly infinite, number of unobservable universes as its load-bearing assumption, which puts it in the same epistemic category as the thing it’s trying to replace.

Both positions treat the constants as prior: fixed before any observer arrives. That assumption is where the argument gets interesting.

The Observer Problem

Quantum mechanics has an unresolved problem at its core. A particle exists in a superposition of states until it’s measured. At the moment of measurement, it resolves to one outcome. The math is exact. The mechanism is unknown. Why does observation collapse probability into actuality?

Most physicists park this question and get on with the calculations. A few take it seriously as a clue about the nature of reality.

John Wheeler spent decades on it. His conclusion, now known as the Participatory Anthropic Principle, was that observers don’t just record the universe; they bring it into being. Not metaphorically. Retroactively, through the act of observation, the universe acquires a definite history. Without observers, there is no collapse, no resolution, no definite past. The universe requires participants to be real.

QBism (quantum Bayesianism) pushes this further. The wavefunction isn’t a property of the universe out there; it’s an agent’s belief-state about what they’ll find when they interact with it. Reality is co-created at the moment of contact between observer and system. There is no view from nowhere.

These aren’t fringe positions. They’re minority positions within a field where the majority hasn’t solved the measurement problem either. The standard interpretation (Copenhagen) essentially says: don’t ask. The wavefunction collapses when observed. Move on. That’s not an answer; it’s a professional courtesy.

What We’re Actually Arguing About

The fine-tuning debate has lasted this long because it isn’t really a physics debate. The physics is genuinely unresolved, but the heat comes from elsewhere. Fine-tuning is a mirror. It reflects whatever you bring to it: the theologian sees confirmation, the materialist sees a threat, the philosopher sees an infinite regress.

What it actually is, stripped of the freight, is an open question about the relationship between observers and the reality they observe. That question is at the center of quantum mechanics, unsolved after a century. Anyone who tells you they’ve answered it (with God, with the multiverse, with consciousness) is telling you more about themselves than about the universe.

The constants are what they are. The blueprints are still inside the control room. We’re standing outside, listening to the machinery run, and arguing about what the building is for.

The Inversion

I write sci-fi. I often start with a “what if” and build a universe from there: its physics, its rules, any departures from today’s starting point, then the situation and the characters. Then I let the story build itself. I discover the story much as you do when reading it.

Taking the above as a starting point: what if the constants aren’t set in advance? What if consciousness and cosmos co-emerge, and the tuning is the relationship, not the precondition?

The standard framing puts observers at the end of a long causal chain: universe forms, constants happen to permit chemistry, chemistry permits biology, biology permits minds. Fine-tuning is the mystery at step one.

The inversion says: the chain runs both ways. The universe doesn’t pre-tune for life. It and life arrive together, and the constants we measure are not prior constraints but the record of that co-emergence. We don’t observe a fine-tuned universe. We participate in one.

This has a strange implication for the Fermi paradox. That paradox asks why, given a universe old enough and large enough to have produced intelligence a thousand times over, we seem to be alone in our corner of it.

The standard answers are grim (they’re dead, they’re hiding, travel is impossible) or optimistic (we’re early, they’re out there, we haven’t looked hard enough). The inversion suggests something stranger: each consciousness-cluster tunes its local physics. Not deliberately, not by choice. By existing. The region of space we occupy is already, in some structural sense, spoken for. Other intelligences fail to appear nearby not because they never had a chance to evolve, but because the neighborhood is already tuned to someone else. This doesn’t rule out life; it says intelligence has an even higher evolutionary mountain to climb. It can happen, but the positioning required is exquisite. Intelligences are, by definition, separated by immense time and space.

Light speed remains the speed limit of natural change (at the Planck foam level, tuning can propagate no faster). The observable universe is many billions of light years across. Other consciousness-centers can evolve concurrently (whatever “at the same time” means across cosmological scales), and as their influence zones expand and eventually overlap, they settle toward equilibrium. There’s no reason other intelligences haven’t evolved or won’t evolve. They just do so in ways that place them very far from each other. If two zones are too orthogonal to reconcile, the incompatibility itself forces a fork: a separate universe instance where both can exist without contradiction.

The Soft Edge

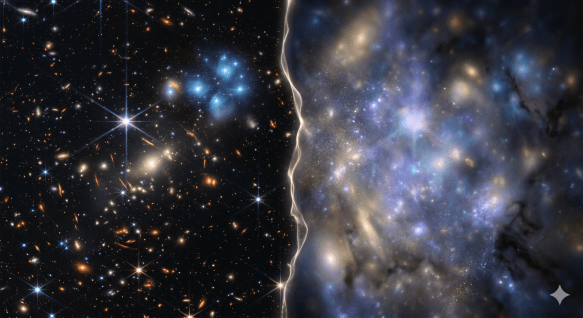

If the constants are the product of co-emergence rather than prior fixtures, they can’t be infinitely rigid. The consciousness-affected zone is vast, maybe millions of light years across, but we can see vastly further than that. The universe as a whole had to hold us as a possibility from the start, so it will be largely coherent. But at the very edges, looking out into reaches beyond our tuned zone, we might see the occasional wobble against our expectations. We do see things we can’t explain. Maybe that’s one reason.

There also has to be some original malleability in the underlying structure. At the Planck length, if our theories are even close, that’s where it would live. Once set, some mechanism, some momentum, holds things together. What that is remains open. But the what-if builds from here: what if minds operate in a non-deterministic state because we evolved a mechanism that makes us more than puppets of causality? What if that mechanism could be amplified? Could the universe, at small scales, be nudged by will, by perception: a natural ability turned up just enough to matter?

The rules of physics we measure in our tuned pocket are local, not universal. Outside that pocket, things are possible that our physics would classify as impossible. Not because the laws of nature have been broken. Because the laws in that region haven’t fully hardened.

This is what a rigorous theory of magic would look like. Not violation of physics. Physics that hasn’t finished resolving.

This isn’t a claim about how the universe works. It’s a coherent frame, reasonably consistent with what we don’t know about quantum measurement and observer participation, and more interesting than either of the standard escapes.

A novel I’m working on takes this seriously as a premise. It builds from the cosmology down to the plot: what happens when a civilization discovers this reality, and what it means that others in distant galaxies may have known it for a long time and have reason to worry about a new competitor.

The adventure and space opera come with the territory. But the physics is the foundation.

What if our physics is contingent rather than absolute?

If You Want to Pull This Thread

John Wheeler, “Information, Physics, Quantum: The Search for Links” (1989). The paper where Wheeler lays out “It from Bit” and the participatory universe. Dense, but the original argument in his own words.

David Deutsch, The Fabric of Reality (1997). Makes the case for the multiverse more rigorously than most popularizations, and is honest about what it costs philosophically. Good for understanding the strongest version of the position before deciding what you think of it.

Chris Fuchs on QBism. His papers are technical, but his interviews and lectures are accessible. Search “QBism Fuchs” and find a talk. The core idea (that the wavefunction is an agent’s belief, not a fact about the world) takes about twenty minutes to understand and longer to shake.

Nick Bostrom, Anthropic Bias (2002). The most careful treatment of observer-selection effects and the anthropic principle. Dry, rigorous, and it will make you distrust every argument in this space, including the ones in this post.

📺 YouTube: The Unretired Engineer | 🔗 LinkedIn | 📚 Published works — M.A. Harris